Launching a course without validation is one of the fastest ways to waste a season of your life. Most creators know the feeling. You sketch the modules, map the bonuses, maybe even record the first lessons, and then a quiet question keeps hanging around in the background.

Does anyone actually want this? Not in a polite survey-answer way. In a pull-out-the-card-and-buy way.

I use AI for market research to answer that question before I build too much. Not to replace actual customer conversations, and not to hand over strategy to a chatbot, but to speed up the messy early stage where I’m trying to understand demand, language, objections, and gaps in the market.

That matters because the old way was slow. You had to manually read forums, collect comments, run surveys, compare competitors, and somehow turn all that into a course idea that could sell. AI makes that process much more manageable for a solo creator or a small team.

Stop Guessing and Start Validating with AI

A lot of failed courses don’t fail because the teacher was bad. They fail because the offer was built from assumptions.

I’ve seen creators build a polished program around what they think students need, only to find out the audience wanted a simpler outcome, a narrower promise, or a completely different format. That’s the trap. You can be smart, experienced, and still misread the market.

What changed for me was treating AI like a research assistant. It helps me collect signals faster, sort through language patterns, and surface repeated pain points before I write the course outline. That’s where AI for market research starts paying off. It saves time in the phase where most creators either procrastinate or guess.

The broader adoption trend backs that up. Marketers using generative AI report saving more than 5 hours per week on content creation tasks, and 68% of marketing leaders report positive ROI on their AI investments, according to Sequencr’s roundup of generative AI statistics. Those numbers aren’t course-specific, but they match what I see in practice. The work gets lighter when AI handles the first pass.

Where AI actually helps

For course validation, I use AI in a few narrow ways:

- Surface demand signals: It groups repeated complaints, desired outcomes, and stalled goals from public conversations.

- Compare competitor positioning: It spots what rival courses promise, and what buyers still say is missing.

- Extract voice-of-customer language: It gives me raw phrases I can reuse in headlines, lesson titles, and sales copy.

- Condense messy inputs: Reviews, comments, transcripts, and survey responses become something I can act on.

Practical rule: If AI gives you speed but not clarity, your inputs are probably too vague.

That same principle shows up outside course creation too. If you want another example of how teams are using AI to tighten their research and messaging workflows, this strategic guide on using AI for B2B marketing is a useful read.

My baseline mindset

I don’t ask AI, “Is this a good course idea?”

That question is too broad, and broad prompts usually produce polished nonsense.

I ask narrower questions like these:

- What problems show up repeatedly in discussions about this topic?

- What are buyers disappointed by in existing offers?

- What language do people use when they describe the result they want?

- What does this audience resist, misunderstand, or delay?

Those are research questions. Once you have them, AI becomes useful.

First Ask What You Really Need to Know

The first mistake most creators make is jumping into a tool before they’ve defined the decision they’re trying to make.

If your prompt is fuzzy, the output will be fuzzy too. AI is very good at organizing information. It’s much less helpful when you ask it to make a business judgment without enough context.

Start with decisions, not ideas

Before I research a course topic, I write down the business choices I need to make. Usually they fall into a handful of buckets.

| Decision | What I need to know |
|---|---|
| Offer direction | Which problem is painful enough to build around |
| Audience fit | Who this course is best for, and who it isn’t |
| Positioning | What current offers miss or overcomplicate |
| Messaging | How buyers describe their struggle and desired outcome |
| Monetization | Whether the pain sounds urgent, optional, broad, or premium |

That quick exercise stops me from collecting random information that never turns into action.

The three questions I use most

Most of my validation work comes back to three core questions.

What problem is still unsolved

I’m looking for friction, not interest.

People saying a topic is “important” doesn’t mean they’ll buy help. I want to see where they’re confused, what they’ve already tried, and what keeps failing. That tells me whether a course has room to exist.

A simple way to frame it is this:

- Current pain: What are they struggling with right now?

- Failed attempts: What have they already bought, tested, or abandoned?

- Desired outcome: What result are they trying to reach in plain language?

If you need structure for this stage, a solid training needs assessment template helps turn scattered research into sharper questions.

What people dislike about current options

Competitor analysis gets more useful when you stop copying module lists and start reading complaints.

I care less about what a competitor claims on their sales page and more about what customers say after buying. Did the course feel too advanced? Too basic? Too theoretical? Missing implementation? Weak community? Generic examples?

Those gaps are often where your best positioning lives.

Read negative reviews slowly. Buyers usually tell you exactly what they expected but didn’t get.

What language the market already uses

This part directly affects sales.

If your audience says “I can’t turn my expertise into a clear offer,” and your page says “build a transformational knowledge product ecosystem,” you’ve already made the sale harder. AI helps me collect those real phrases at scale so I can borrow wording that feels native to the audience.

A better prompt setup

Instead of typing a one-line question into ChatGPT, I give the AI a short brief:

- Audience: Who I think this course is for

- Topic: What I’m considering teaching

- Decision: What I’m trying to decide

- Inputs: The type of data I’m about to paste in

- Output format: Themes, objections, quotes, and content gaps
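If you run the same brief across several course ideas, it can help to keep it as a small script instead of retyping it. Here is a minimal Python sketch, assuming you paste the resulting text into whatever chat tool you use. The `ResearchBrief` class and the example values are hypothetical, not part of ChatGPT or any other product.

```python
from dataclasses import dataclass


@dataclass
class ResearchBrief:
    """Hypothetical container for the five brief fields listed above."""
    audience: str
    topic: str
    decision: str
    inputs: str
    output_format: str

    def to_prompt(self, dataset: str) -> str:
        # State the brief first so the model knows the decision before it reads the data.
        header = "\n".join([
            f"Audience: {self.audience}",
            f"Topic: {self.topic}",
            f"Decision: {self.decision}",
            f"Inputs: {self.inputs}",
            f"Output format: {self.output_format}",
        ])
        # Fence the pasted data so it stays separate from the instructions.
        return f"{header}\n\n--- DATASET START ---\n{dataset}\n--- DATASET END ---"


# Example values are made up for illustration.
brief = ResearchBrief(
    audience="Freelance designers who want to start teaching",
    topic="Turning design expertise into a first online course",
    decision="Whether the pain is urgent enough to justify a paid course",
    inputs="Reddit comments and competitor course reviews",
    output_format="Themes, objections, quotes, and content gaps",
)
print(brief.to_prompt("I can't turn my expertise into a clear offer."))
```

Putting the decision in the header is the point: the model reads the data already knowing what you need to decide.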

That setup makes the difference between “interesting summary” and “usable research.”

Build Your AI-Powered Listening Engine

Good analysis starts with good raw material.

For course creators, that usually means public conversations. You do not need a giant research budget to find them. You need places where your future students talk when they aren’t being sold to.

Where I collect the best signals

My favorite sources are usually the least polished ones.

- Reddit threads: Great for pain points, failed attempts, and blunt objections

- YouTube comments: Useful for beginner confusion and repeated questions

- Amazon book reviews: Strong source for what people hoped a product would solve

- Course review pages: Helpful for feature gaps and delivery complaints

- Facebook groups and niche communities: Best for current language and recurring frustrations

- My own email replies, DMs, and webinar questions: Often the cleanest signal of buying intent

Each source has a different bias. That’s why I like mixing them.

A creator who only reads YouTube comments may think the market wants quick tips. A creator who only reads paid course reviews may think the market wants depth. Both can be true, but for different segments.

Don’t wait for perfect integrations

This is one of the annoying parts of AI for market research in e-learning. The tools rarely connect as cleanly as you want.

The real issue for educators is that AI can combine multiple sources, but many creators still run into API incompatibilities between their tools. In practice, because of those data silos, social listening insights often have to be transferred by hand into course platforms like Kajabi or community tools like Circle.so, as discussed by TGM Research on AI’s impact on market research.

That matches my workflow. I still do some manual transfer.

I’ll collect comments in one place, export survey answers from another, pull community questions from another, and then feed everything into one analysis pass. It isn’t elegant, but it works.

My simple collection workflow

I keep this part boring on purpose.

- Create a folder for the idea: One folder per course concept. Inside it, I keep raw comments, reviews, transcripts, screenshots, and notes.

- Split sources by type: I separate competitor reviews, audience comments, and my own customer feedback so I can compare them later.

- Keep the original wording: I don’t rewrite comments at this stage. The exact phrasing is part of the research value.

- Tag recurring themes manually: Before AI touches anything, I mark obvious repeats like pricing confusion, implementation fear, or too-many-options overwhelm.
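If the pile of comments is large, a rough keyword count can show you where to look first during that manual tagging pass. This is a minimal Python sketch, not a replacement for reading: the theme names come from the list above, but the keyword lists are hypothetical, and plain substring matching is crude (for example, “charge” would also match “in charge of”).

```python
from collections import Counter

# Hypothetical keyword lists; swap in the phrases your audience actually uses.
THEME_KEYWORDS = {
    "pricing confusion": ["price", "pricing", "how much", "charge"],
    "implementation fear": ["stuck", "don't know where to start", "implement"],
    "too-many-options overwhelm": ["overwhelm", "too many", "which one"],
}


def tag_comment(comment: str) -> list[str]:
    """Return every theme whose keywords appear in the comment (case-insensitive)."""
    text = comment.lower()
    return [theme for theme, words in THEME_KEYWORDS.items()
            if any(word in text for word in words)]


def count_themes(comments: list[str]) -> Counter:
    """Count how many comments mention each theme at least once."""
    counts = Counter()
    for comment in comments:
        counts.update(tag_comment(comment))
    return counts


comments = [
    "I have no idea what to charge for my first course.",
    "So many platforms, I'm totally overwhelmed.",
    "I always get stuck once the lessons are recorded.",
    "Pricing is the part I keep avoiding.",
]
print(count_themes(comments))
```

I would treat the counts as a hint about which themes to read closely, then confirm each tag by hand.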

If you’re also collecting direct feedback through forms, this guide to market research survey software is useful for choosing a setup that doesn’t add extra friction.

Add your own customer inputs

Public data is great. Owned data is better.

If you already run workshops, mini-courses, office hours, or a membership, use that material first. Student surveys, lesson feedback, and support questions usually reveal where people stall. I keep a lightweight system for this, and if you want a practical framework, this guide on how to manage course feedback surveys is worth bookmarking.

What not to collect

Not every mention is useful.

I ignore generic praise, reposted takes, and trend-chasing comments that don’t describe a real struggle. I also avoid overloading the AI with junk. A smaller set of honest comments beats a bloated file full of noise.

If a comment doesn’t reveal pain, desire, confusion, or resistance, it probably won’t improve your course idea.

Let Your AI Assistant Analyze the Data

A folder full of comments does not help much on its own. The value shows up when the patterns are clear enough to shape a real course offer, a membership tier, or a sales page promise.

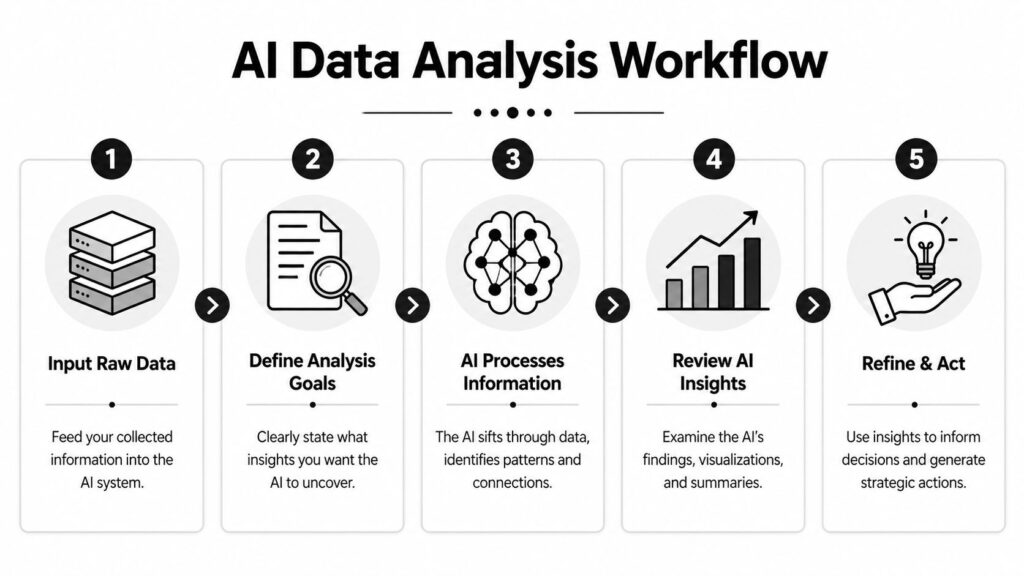

Once I have Reddit threads, review snippets, survey responses, webinar transcripts, and community posts in one place, I run them through an LLM with tight instructions. I do not ask for a grand strategy in one shot. I use AI for sorting, clustering, and surfacing patterns I can verify, then I connect those findings to the way I build inside my LMS and community stack.

The analysis pipeline I use

My workflow is simple enough to repeat every time I test a new course or membership idea.

First, I clean the dataset so the model is not reacting to junk. Then I run separate prompts for separate jobs. After that, I review the output against the raw comments before I make any product decision.

That sounds basic. It saves a lot of bad decisions.

Step one, clean the inputs

I remove duplicates, spam, vague one-liners, and comments that make no sense without missing context. Then I sort the remaining material by source, such as:

- Reddit comments

- Competitor reviews

- Survey responses

- Webinar chat questions

- Community posts

This matters because source affects interpretation. A complaint in a paid community usually means something different from a casual complaint on social media.

If I am validating a course upgrade, I also label the source by product stage. Pre-sale questions, student support tickets, and lesson feedback should not be mixed together without tags. Inside a spreadsheet or Airtable, I usually add columns for source, audience level, offer type, and date. That makes it easier to feed filtered batches into AI and compare results later.
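The same cleaning and labeling steps can be sketched in a few lines of Python if you would rather not do them by hand in a spreadsheet. This is a minimal sketch under stated assumptions: the spam markers, the five-word cutoff for “vague one-liners”, and the `Comment` fields (mirroring the source, audience level, offer type, and date columns above) are my own illustrative choices, not rules from any tool.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical spam markers and length cutoff; tune both for your own data.
SPAM_MARKERS = ("http://", "https://", "dm me", "promo code")
MIN_WORDS = 5  # comments shorter than this are treated as vague one-liners


@dataclass
class Comment:
    text: str
    source: str          # e.g. "reddit", "competitor_review", "survey"
    audience_level: str  # e.g. "beginner", "intermediate", "advanced"
    offer_type: str      # e.g. "course", "membership"
    collected: date


def clean(comments: list[Comment]) -> list[Comment]:
    """Drop duplicates (ignoring case and spacing), obvious spam, and one-liners."""
    seen: set[str] = set()
    kept: list[Comment] = []
    for c in comments:
        key = " ".join(c.text.lower().split())
        if key in seen:
            continue  # same wording already kept, even if from another source
        if any(marker in key for marker in SPAM_MARKERS):
            continue
        if len(key.split()) < MIN_WORDS:
            continue
        seen.add(key)
        kept.append(c)
    return kept


def batch(comments: list[Comment], **filters: str) -> list[Comment]:
    """Keep only comments whose label fields match every filter, e.g. source="survey"."""
    return [c for c in comments
            if all(getattr(c, name) == value for name, value in filters.items())]


raw = [
    Comment("I can't figure out how to price my first course", "reddit", "beginner", "course", date(2024, 5, 2)),
    Comment("i can't figure out how to price my  first course", "reddit", "beginner", "course", date(2024, 5, 3)),
    Comment("Great video!", "youtube", "beginner", "course", date(2024, 5, 3)),
    Comment("Use promo code SAVE50 for my course bundle today", "youtube", "beginner", "course", date(2024, 5, 4)),
    Comment("The course felt too advanced once we got to automations", "competitor_review", "intermediate", "course", date(2024, 5, 5)),
]
cleaned = clean(raw)
print(len(cleaned), [c.source for c in batch(cleaned, source="competitor_review")])
```

The `batch` filter is what makes “feed filtered batches into AI” practical: you can send only competitor reviews, or only beginner survey answers, and compare the results.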

Step two, give the model one job at a time

I do not use one giant prompt for everything.

I run separate passes for pain points, objections, desired outcomes, language patterns, missing support, and buying triggers. Single-purpose prompts produce cleaner output and make review faster. They also map better to the decisions I need to make in my course platform. One prompt helps me outline modules. Another helps me write the course promise. Another helps me decide what belongs in the community instead of the curriculum.

That split is useful for creators with an LMS plus a community tool. If AI finds repeated implementation questions, I may answer those with office hours, a discussion space, or a member Q&A thread instead of adding another lesson to the course.

Step three, require evidence in the output

I want themes with receipts.

So I ask the model to group patterns and include supporting excerpts under each theme. I also ask it to separate direct evidence from interpretation. That one change cuts down a lot of polished nonsense.

A good research prompt asks for themes plus proof, not themes alone.

The prompt templates I reuse

I keep a small prompt library and revise it as I learn where the model tends to drift.

| Research Goal | Prompt Template |
|---|---|
| Identify pain points | “Analyze the dataset below from prospective course buyers. Group recurring pain points into clear themes. For each theme, include representative phrases from the dataset and explain what the person seems to want help with.” |
| Find content gaps | “Review these competitor reviews and comments. Identify what buyers expected but did not receive. Summarize the missing outcomes, missing support, or unclear teaching points.” |
| Extract voice of customer language | “Pull out repeated phrases people use to describe their struggle, desired result, frustration, or hesitation. Keep the original wording where possible. Organize by problem, goal, and objection.” |
| Segment beginner vs advanced needs | “Separate the comments into beginner, intermediate, and advanced concerns based on wording and context. Explain what each group appears to need from a course or membership.” |
| Surface buying resistance | “Read the dataset and identify reasons people delay buying help. Distinguish between practical objections, trust objections, and self-belief issues.” |
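If you keep the library in a script, each research goal can become its own single-purpose prompt with the evidence requirement attached automatically. Below is a minimal Python sketch: three templates are copied from the table above, while the dictionary keys and the exact wording of `EVIDENCE_RULE` are my own assumptions about how to phrase “themes with receipts.”

```python
# Templates copied from the table above; the dictionary keys are my own short labels.
PROMPT_LIBRARY = {
    "pain_points": (
        "Analyze the dataset below from prospective course buyers. Group recurring "
        "pain points into clear themes. For each theme, include representative phrases "
        "from the dataset and explain what the person seems to want help with."
    ),
    "content_gaps": (
        "Review these competitor reviews and comments. Identify what buyers expected "
        "but did not receive. Summarize the missing outcomes, missing support, or "
        "unclear teaching points."
    ),
    "buying_resistance": (
        "Read the dataset and identify reasons people delay buying help. Distinguish "
        "between practical objections, trust objections, and self-belief issues."
    ),
}

# Assumed wording for the "themes with receipts" rule; not a fixed formula.
EVIDENCE_RULE = (
    "Under each theme, quote supporting excerpts word for word with their [ID]. "
    "Label each statement EVIDENCE (quoted from the data) or INTERPRETATION (your inference)."
)


def render_prompt(goal: str, comments: list[str]) -> str:
    """Build one single-purpose prompt: template, evidence rule, then numbered data."""
    if goal not in PROMPT_LIBRARY:
        raise KeyError(f"unknown research goal: {goal}")
    numbered = "\n".join(f"[{i}] {text}" for i, text in enumerate(comments, start=1))
    return f"{PROMPT_LIBRARY[goal]}\n\n{EVIDENCE_RULE}\n\nDATASET:\n{numbered}"


comments = [
    "I bought two courses and still can't land my first client.",
    "Too much theory, not a single template I could reuse.",
]
# One pass per research goal instead of one giant prompt.
prompts = {goal: render_prompt(goal, comments) for goal in PROMPT_LIBRARY}
print(prompts["content_gaps"])
```

Numbering each comment gives the model something concrete to cite, which makes the review pass much faster.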

If you want another perspective on structuring this work, I liked this piece on analysing market research because it pushes beyond generic summaries and toward decision-ready analysis.

What I trust, and what I check myself

AI is good at pattern recognition across a messy pile of text. It is much less reliable when it starts making strategic jumps, predicting what buyers will do next, or inventing confidence that the source material does not support.

For course creators, that failure usually shows up in expensive ways. You end up adding a module nobody asked for, splitting your audience into segments that are not real, or writing a promise that sounds strong on a sales page but falls apart once students enter the course.

That is why I review every theme before it touches the product. If a pattern cannot survive a quick check against raw comments, it does not go into the outline.

My review pass

Before I use any output, I ask three questions:

- Did this theme appear across multiple sources?

- Did the AI preserve the audience’s real language?

- Would I make a real product decision based on this, or does it just sound smart?
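The first two questions can be partly checked by script, because they are about the raw data rather than judgment. Here is a minimal Python sketch, assuming the AI returned each theme with a list of quotes: it keeps only quotes that appear (after light normalization) in your raw comments, and counts sources from those matches rather than from the model’s claims. The `RawComment` type and `review_theme` function are hypothetical, and the third question stays with you.

```python
import re
from dataclasses import dataclass


@dataclass
class RawComment:
    source: str
    text: str


def normalize(text: str) -> str:
    """Lowercase, straighten curly apostrophes, and collapse whitespace."""
    text = text.lower().replace("\u2019", "'")
    return re.sub(r"\s+", " ", text).strip()


def review_theme(name: str, quotes: list[str], raw: list[RawComment],
                 min_sources: int = 2) -> dict:
    """Check an AI-reported theme against the raw comments it claims to summarize."""
    verified_sources: set[str] = set()
    unverified: list[str] = []
    for quote in quotes:
        q = normalize(quote)
        hits = {c.source for c in raw if q in normalize(c.text)}
        if hits:
            verified_sources |= hits
        else:
            unverified.append(quote)  # paraphrased or invented: check it by hand
    return {
        "theme": name,
        "sources": sorted(verified_sources),
        "multi_source": len(verified_sources) >= min_sources,
        "unverified_quotes": unverified,
    }


raw = [
    RawComment("reddit", "Honestly I can\u2019t turn my expertise into a clear offer."),
    RawComment("survey", "I can't turn my expertise into a clear offer, it feels fuzzy."),
    RawComment("competitor_review", "Way too much theory and not enough examples."),
]
report = review_theme(
    "Offer clarity",
    ["can't turn my expertise into a clear offer", "struggle to package knowledge"],
    raw,
)
print(report)
```

A quote that fails the match is not automatically wrong, but it is no longer the audience’s real language, which is exactly what the second question asks about.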

I also do one practical check that matters for monetization. Can this insight change something concrete in the build? A lesson title, a bonus resource, an onboarding email, a community prompt, a pricing page section. If the answer is no, the insight may be interesting but it is not useful yet.

This review step is also where I connect research back to delivery. If the pattern points to structured teaching, it belongs in the course. If it points to accountability or troubleshooting, it may belong in the membership. If it points to confusion before purchase, I fix the sales page or pre-sale email sequence. That is the same thinking I use when planning how to create an online course to sell without stuffing every idea into version one.

The best AI market research workflow for creators is disciplined. Collect the right inputs, run focused prompts, verify the evidence, and use the findings where they belong in your LMS, your community, and your offer.

Turn AI Insights into a Profitable Course

A course starts making money when the research shows up in the product, the sales page, and the student experience.

When I validate a course idea with AI, I am not trying to collect interesting patterns. I am trying to answer practical build questions. What should go inside the LMS first? What belongs in the community instead of the curriculum? Which promise can I charge for with confidence? That shift matters because creators do not get paid for research. They get paid for a clear outcome that students can see and trust.

Build around the transformation people will pay for

The outline should follow the repeated pain points, not your full knowledge base.

If the research keeps pointing to stalled progress at the same stage, that stage becomes a module. If buyers keep asking for feedback, accountability, or troubleshooting, that may belong in your membership or community layer instead of the core course. I make that call early so the product stays focused and the offer stack makes sense.

My first pass usually looks like this:

| Layer | What goes in it |
|---|---|
| Core transformation | The shortest path from the student’s problem to the promised result |
| Support material | Templates, examples, checklists, office hours replays, implementation guides |
| Retention assets | Community prompts, accountability threads, bonus sessions, progress milestones |

That structure also helps with the tech setup. Core lessons go into the LMS. Ongoing discussion and peer wins go into the community platform. Bonus support can sit behind a membership tier if it helps students keep momentum after the course ends.

Use failed competitor experiences to sharpen the offer

Competitor research becomes useful when it changes your positioning.

I look for frustration patterns in reviews, Reddit threads, YouTube comments, and course testimonials. If buyers keep saying a category is too vague, too advanced, or too heavy on theory, I do not copy the category. I build against the gap. For an e-learning creator, that might mean a tighter beginner path, more worked examples, or a stronger implementation layer inside the course portal.

Those complaints can shape monetization decisions too. A one-time course works well when students want a defined path and a quick result. A membership makes more sense when the struggle is ongoing and people need recurring feedback, fresh examples, or community accountability.

Turn customer language into sales assets

Good research shortens the distance between what students say and what your offer says back.

I use voice-of-customer notes to name modules, write landing page sections, draft webinar titles, and structure onboarding emails. The best phrases are usually simple. They describe a stuck point, a desired outcome, or a fear about wasting time and money again.

That language improves more than the sales page. It helps you decide what appears in the course dashboard, what gets pinned in the community, and what should trigger an automated email inside your stack. If students keep worrying about falling behind, add progress checkpoints in the LMS and send reminder emails tied to lesson completion. If they worry about implementation, create discussion prompts and office hour invites inside the community.

If you are shaping the full product around this research, this guide on how to create an online course to sell pairs well with the validation process.

Use AI to draft. Keep product decisions human.

AI is helpful at turning messy inputs into a first outline. It is not qualified to decide what earns a place in your paid curriculum.

I have seen AI-generated course structures that look polished and still miss the buying trigger. They add nice-sounding lessons, drift into adjacent topics, and make version one harder to finish. That hurts sales and student completion. A larger course often looks more valuable at first glance, but a tighter course usually performs better because the promise is clearer and the path is easier to follow.

My workflow is simple:

- Build the outline from repeated pain points and desired outcomes

- Map each module to a stage in the student journey

- Assign delivery format by job: LMS lesson, worksheet, live session, community discussion, or membership bonus

- Ask AI to find gaps, overlaps, and confusing jumps

- Remove anything that does not support the core transformation

- Add support assets only where they improve completion or confidence
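Before asking AI to look for gaps and overlaps, a quick structural check of the outline can catch the obvious ones. This is a minimal Python sketch under stated assumptions: the journey stages are hypothetical, the delivery formats mirror the list above, and the `supports_core` flag records your own judgment about the core transformation, not the model’s.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical journey stages and delivery formats; replace them with your own.
JOURNEY = ["clarify the offer", "build the first version", "launch", "get first students"]
FORMATS = {"lms_lesson", "worksheet", "live_session", "community_discussion", "membership_bonus"}


@dataclass
class Module:
    title: str
    stage: str
    delivery: str
    supports_core: bool  # set by you after review, not by the model


def review_outline(modules: list[Module]) -> dict:
    """Cut off-core modules, then flag empty stages and stages to check for overlap."""
    for m in modules:
        if m.stage not in JOURNEY:
            raise ValueError(f"{m.title}: unknown stage {m.stage!r}")
        if m.delivery not in FORMATS:
            raise ValueError(f"{m.title}: unknown delivery format {m.delivery!r}")
    kept = [m for m in modules if m.supports_core]
    by_stage: dict[str, list[str]] = defaultdict(list)
    for m in kept:
        by_stage[m.stage].append(m.title)
    return {
        "kept": [m.title for m in kept],
        "cut": [m.title for m in modules if not m.supports_core],
        "empty_stages": [s for s in JOURNEY if s not in by_stage],
        "check_overlap": {s: titles for s, titles in by_stage.items() if len(titles) > 1},
    }


outline = [
    Module("Name the problem you solve", "clarify the offer", "lms_lesson", True),
    Module("Offer clarity worksheet", "clarify the offer", "worksheet", True),
    Module("Record your first lesson", "build the first version", "lms_lesson", True),
    Module("A history of e-learning", "build the first version", "lms_lesson", False),
    Module("Launch week Q&A", "launch", "live_session", True),
]
print(review_outline(outline))
```

An empty stage suggests a confusing jump in the student journey, while a crowded stage is only a prompt to check for overlap; a lesson paired with a worksheet may be exactly right.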

That process gives me a course I can price, package, and deliver without stuffing every idea into version one.

Your Ethical and Human-Powered Advantage

AI can speed up research. It cannot care about your students.

That still belongs to you. And in course creation, that difference matters more than people think. A model can cluster responses and summarize patterns, but it doesn’t understand the emotional weight behind a stalled career change, a failed launch, or a buyer who’s embarrassed they’ve already paid for three courses that didn’t help.

The risks are real

There are two issues I take seriously.

One is algorithmic bias, which comes from uneven training data. The other is bad input data. In its article on AI in market research and synthetic data, Monigle notes that fraudulent respondents can account for up to 50% of some online survey panels, and when AI models learn from that kind of data, the output can drift far from reality.

That’s why I prefer using public conversations, my own audience feedback, and carefully reviewed survey responses rather than blindly trusting generic datasets.

What human review catches

Human review matters most in places where nuance changes the meaning.

A creator can spot when a complaint is really about confidence, not curriculum. A good teacher can tell when buyers say they want “more content” but actually need a clearer path. AI tends to flatten those distinctions unless the input is excellent and the review is careful.

Use AI to compress the reading. Use human judgment to interpret the stakes.

The edge that still wins

The practical advantage isn’t having more tools than everyone else.

It’s being able to combine fast analysis with lived expertise. If you know your subject, know your learners, and use AI for market research to listen better, you can build offers that feel grounded instead of generic. That’s hard to fake.

And that’s usually what buyers respond to. They don’t want the most “AI-powered” course. They want a course that solves a real problem in a way that feels trustworthy.